Security is hard. You only have to follow Troy Hunt and have a look at https://haveibeenpwned.com/ to see how often companies get hacked and what damage is done. And damage is not only money but also reputation. Some teams still think they don’t have to worry about security. Maybe you’ve been in some of the following discussions:

- How real is the threat?

- Our team is good, right?

- I don’t think that’s possible

- We’ve never been breached

- Endless debates about value

During the FastTrack summit, the VSTS team discussed how they changed the story around security and the steps they took to make sure that VSTS is a safe and secure service. In this post I want to highlight some of the things they did and what you can learn from them.

This is part 6 of a series on DevOps at Microsoft:

- Moving to Agile & the Cloud

- Planning and alignment when scaling Agile

- A day in the life of an Engineer

- Running the business on metrics

- The architecture of VSTS

- Changing the story around security

- How to shift quality left in a cloud and DevOps world

- Deploying in a continuous delivery and cloud world or how to deploy on Monday morning

- The end result

Red vs Blue

Often security is based on designing threat models and creating countermeasures against those threats. By doing code reviews, security testing and using a Secure Development Lifecycle (SDL), you can prevent breaches. But preventing breaches is not enough. This quote from former NSA & CIA director Michael Hayden sums it all up:

Fundamentally, if somebody wants to get in, they’re getting in…accept that. What we tell clients is: Number one, you’re in the fight, whether you thought you were or not. Number two, you almost certainly are penetrated.

Michael Hayden, former Director of the NSA & CIA

In a DevOps world, a separate security process isn’t feasible anymore. You want to incorporate security in your tools and deployment pipeline and treat it as a daily part of your work.

To change the mindset in the VSTS team, they began running what’s called Red vs Blue team exercises. The Red team attacks the service while the Blue team defends.

Figure 1 Red team and blue team exercises help the VSTS team build a secure service

The following list shows the progress the VSTS team has made while doing these exercises.

- Earlier

  - Identify vulnerabilities through manual code review

  - Engage external pen testing company

  - Report out to the team, fixes added to the backlog

- 2015

  - Paired a handful of security-minded engineers with a pen tester (Red team)

  - A few ops-minded engineers that understand the systems & logging available (Blue team)

  - Attacks were successful due to poorly protected secrets, SQL injection & successful phishing campaigns

- 2016

  - Augment both teams with outside experts (AD security and IT Security Incident Response experts)

  - Comprehensive, centralized logging available for Blue team to do post-breach forensics

  - Attacks were based upon Cross-site scripting (XSS), deserialization, & engineering system vulnerabilities

- 2017

  - Red team taking longer to reach objectives. Forced to find & chain 5-6 different vulnerabilities together

  - With Kalypso monitors, Blue team is starting to catch Red team in real-time

At the start of this process, the Red team found test environment credentials stored on file shares and used those to compromise the system. Today, the Red team has a much harder time hacking the system and, because of better monitoring, is caught in real time by the Blue team.

What I found interesting is how Microsoft used their own engineers and added external security experts to the Blue and Red teams for extra knowledge. This helped the team become more security aware and led to a lot of knowledge sharing. In the beginning those security experts were externally contracted; nowadays the different Red and Blue teams in the organization lend people to each other for exercises.

Today we’re seeing that the Blue team is always active. It acts as a real time forensic unit and isn’t disbanded after an exercise. The Blue team is getting better and better but the Red team has still won every exercise.

Some of the vulnerabilities found in these exercises are deemed critical and are fixed right away. Other items are added to the backlog of the different teams and fixed during their regular sprints. The following table gives you an idea of the number of items found during these exercises:

| Year | Items found |
|---|---|
| 2015 | 63 |
| 2016 | 119 |
| 2017 | 44 |
| Total | 226 |

If you want to know more about how Microsoft runs these exercises, you can read a public whitepaper: Microsoft Enterprise Cloud Red Teaming (Walton, 2016) - https://gallery.technet.microsoft.com/Cloud-Red-Teaming-b837392e

Some guidelines

The VSTS team put some guidelines in place to make sure that these exercises don’t escalate and cause real damage, and that the team can learn from them.

- Code of Conduct

  - Both the Blue Team and the Red Team will do no harm

  - The Red Team should not compromise more than needed to capture target assets

  - Common sense rules apply to physical attacks (no printing badges, harassing people, etc.)

  - Do not disclose the name of the person who was compromised in a social engineering attack

- Rules of Engagement

  - Do not impact availability of any system

  - Do not access external customer data

  - Do not significantly weaken in-place security protections on any service

  - Do not intentionally perform destructive actions against any resources

  - Safeguard credentials, vulnerabilities and other critical information obtained

- Deliverables

  - Backlog of repair items

  - Report “read out” with entire organization as a learning opportunity

Example attack

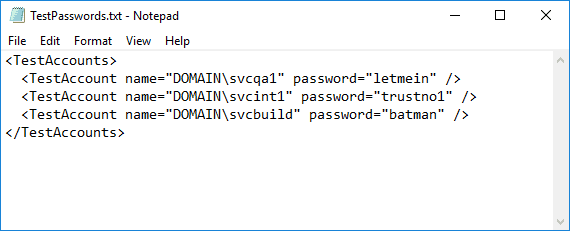

One tool the Red team used is Mimikatz. Among other things, Mimikatz lets you retrieve the passwords of other accounts on a machine, provided it runs as a local admin on that machine. It does this by extracting information from lsass.exe (Local Security Authority Subsystem Service). Now imagine that the Red team finds the following test credentials on a share:

Figure 2 A sample of test credentials stored in plain text

These test credentials aren’t useful in themselves for getting access to a production environment. But what if a developer has added the test accounts to her machine as local admins? That makes local development so much easier! Running Mimikatz with the test credentials on this developer’s machine suddenly extracts the password of the developer.

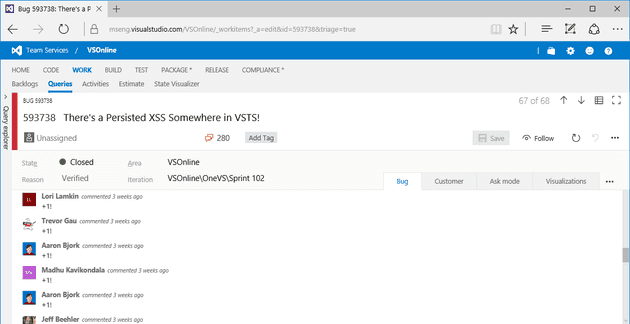

Now you can log on to the system as the developer, and since you can impersonate this person, you can access VSTS with these credentials. VSTS is used to store all the code, builds and releases for VSTS itself. The newly found access is then used to edit a build definition so that it patches the code during a build with some new ‘features’. This build is then happily deployed to production without anyone knowing there is malicious code in the deployment. And finally you get a result like this:

Figure 3 The injected code made the dashboard spin every time someone viewed it

And that’s not all. To emphasize their hack, the Red team added code that automatically votes on a work item when someone runs into the dashboard hack.

Figure 4 A work item counted how many people ran into the hack by voting on it

How to defend yourself

While watching the example attacks and the astonishing results of the Red team, I became a little scared. Attackers are so skilled and have so many options that defending against these attacks is hard, but it is absolutely necessary.

For some issues, you need to raise awareness. Not storing test credentials in plain text files is one such issue. For others, you need to make sure that your computers are patched and running the right version. The Mimikatz example can be countered by running Windows 10 Enterprise with Credential Guard enabled.

You can also use a set of tools in the IDE and the deployment pipeline to help your developers catch mistakes. CredScan Code Analyzer is an extension to Visual Studio that warns users whenever they use passwords, tokens, etc. in their code. Azure Key Vault is another very important service: it securely stores your credentials and lets your Azure-deployed applications access them. This helps you move all credentials to a secure store and rotate them whenever a credential is compromised. You can even do this during development by using the Azure Services Authentication Extension.

Of course, you should enable two-factor authentication wherever possible. Another step is separating Azure subscriptions for dev/test and production, so that attackers who get into your dev/test subscription still can’t access production resources. Finally, use Azure Security Center. This is a really great tool that analyzes your Azure resources, gives you recommendations you can act on today, and monitors for attacks in real time to help you defend yourself.
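To make the Key Vault advice a bit more concrete, here is a minimal Python sketch of what keeping credentials out of your code can look like. It is not taken from the VSTS team. The names `EnvSecretStore`, `KeyVaultSecretStore`, `make_store` and `database_password`, the `KEY_VAULT_URL` setting and the `db-password` secret are all assumptions for illustration, and the Key Vault part assumes the `azure-identity` and `azure-keyvault-secrets` packages are installed.

```python
import os


class EnvSecretStore:
    """Development fallback: looks secrets up in environment variables."""

    def get(self, name: str) -> str:
        # Map a vault-style name such as "db-password" to "DB_PASSWORD".
        return os.environ[name.upper().replace("-", "_")]


class KeyVaultSecretStore:
    """Reads secrets from an Azure Key Vault."""

    def __init__(self, vault_url: str) -> None:
        # Imported here so the sketch still loads without the Azure SDK.
        from azure.identity import DefaultAzureCredential
        from azure.keyvault.secrets import SecretClient

        self._client = SecretClient(
            vault_url=vault_url, credential=DefaultAzureCredential()
        )

    def get(self, name: str) -> str:
        return self._client.get_secret(name).value


def make_store():
    """Use Key Vault when KEY_VAULT_URL (a hypothetical setting) is set."""
    vault_url = os.environ.get("KEY_VAULT_URL")
    return KeyVaultSecretStore(vault_url) if vault_url else EnvSecretStore()


def database_password(store) -> str:
    # Application code asks the store; the value never lives in source control.
    return store.get("db-password")
```

The point of the small `get` interface is that application code looks the same whether it runs on a developer machine or in Azure, so nobody is tempted to paste a password into a config file just to get things working locally.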

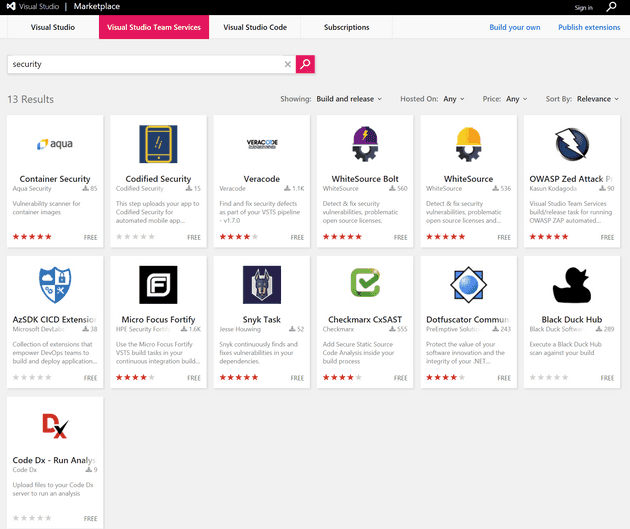

And of course, add security checks to your deployment pipeline! Searching the Marketplace for “security” gives you a lot of third-party solutions that you can use.

Figure 5 The VSTS Marketplace helps you in finding security tools
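As an idea of what such a pipeline check can look like, here is a minimal Python sketch of a credential scan that fails a build when it finds something that looks like a secret. This is not how CredScan or any Marketplace extension works internally. The two patterns and the names `scan_text`, `scan_tree` and `gate` are assumptions I made up for illustration, and real scanners ship much larger, tuned rule sets.

```python
import re
from pathlib import Path

# Illustrative patterns only; expect both false positives and misses.
PATTERNS = [
    re.compile(
        r"(?i)(password|passwd|pwd|secret|token|api[_-]?key)"
        r"\s*[:=]\s*['\"]?[^\s'\"]{4,}"
    ),
    re.compile(r"-----BEGIN (RSA |EC )?PRIVATE KEY-----"),
]


def scan_text(text: str) -> list[int]:
    """Return the 1-based numbers of lines that look like they hold a secret."""
    return [
        number
        for number, line in enumerate(text.splitlines(), start=1)
        if any(pattern.search(line) for pattern in PATTERNS)
    ]


def scan_tree(root: Path) -> dict[Path, list[int]]:
    """Scan every readable text file below root."""
    findings: dict[Path, list[int]] = {}
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # Skip binaries and unreadable files.
        hits = scan_text(text)
        if hits:
            findings[path] = hits
    return findings


def gate(root: Path) -> int:
    """Report findings and return a process exit code (non-zero fails the build)."""
    findings = scan_tree(root)
    for path, lines in findings.items():
        numbers = ", ".join(str(n) for n in lines)
        print(f"{path}: possible secret on line(s) {numbers}")
    return 1 if findings else 0
```

In a build step you could end the script with `raise SystemExit(gate(Path(".")))`, so that any finding stops the build before the code gets anywhere near production.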

Conclusion

I find tooling such as Mimikatz scary. Hackers are getting better and better and you really have to be aware of this. Changing the story around security is important. Red/blue exercises are a very good way to get your team more involved with security. They will help you find issues and teach your developers what they can do about them. Fortunately, Microsoft and other vendors build a lot of tooling to help you defend your application, but it all starts with raising awareness and getting security on the agenda.

In the next part, I’ll look into what the VSTS team did to improve their quality and test process to adapt to this new DevOps world.