In the wonderful world of the cloud, being a T-shaped professional is becoming more and more important. I’m a developer, and my knowledge of IaaS and networking is, let’s put it gently, not so good. This week I ran into some issues when setting up a DevTest Labs environment with virtual machines configured for PowerShell Remoting. In this blog post I’ll show you an easy way to configure PowerShell Remoting (WinRM) on your machines and share some networking knowledge I picked up along the way.

Some background information

I’m working on setting up a complete delivery pipeline, running on Team Foundation Server 2017 with an on-premises deployment model, for an application that uses an Angular frontend, a SQL Server database, SQL Server Analysis Services and SQL Server Integration Services. To build a demo environment for this pipeline, I use VSTS and Azure to mimic the on-premises environment.

This means I must create and manage a bunch of IaaS virtual machines on Azure. I chose DevTest Labs for this setup because it can automatically shut down, start up and delete virtual machines on a schedule. I can also apply artifacts to a machine to configure it the way I want before deploying my software on it. In addition, it gives me nice cost-tracking features and lets me set a maximum on my costs, so I don’t burn through all my Visual Studio subscription credits.

Did you know that if you have a Visual Studio subscription, you get free credits for Azure each month that you can use to test features and learn how Azure works without spending any money? Haven’t activated this yet? Go to https://azure.microsoft.com/en-us/pricing/member-offers/credit-for-visual-studio-subscribers/ to activate your credits.

To access these virtual machines, I would love to use the new Deployment Groups option, but that’s not available yet in TFS 2017. Instead, I chose to run remote PowerShell commands on the machines to install all the parts of my application.

Applying WinRM as a DevTest Labs artifact

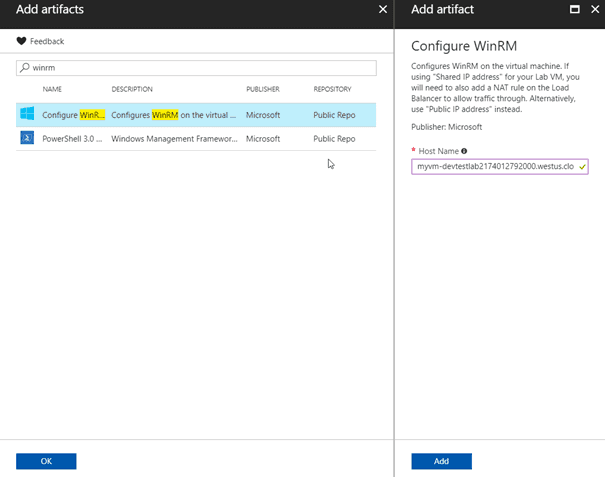

This great blog post by Tarun explains how to configure WinRM using the DevTest Labs Run PowerShell artifact. However, after following it I still couldn’t access my Azure VM from my local development machine. The DevTest Labs team is great: when I contacted them, they created a new artifact that deploys WinRM in one go. Because this is an artifact, DevTest Labs logs each individual step of the script, which makes it much easier to debug if something goes wrong.

If you apply this artifact to an existing virtual machine, it will configure WinRM for you by adding a firewall rule, creating a test certificate and configuring WinRM to listen on HTTPS.
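To make those three steps concrete, here is roughly what they look like when done by hand on the VM. This is a minimal sketch, not the artifact’s actual script: it assumes Windows Server 2012 R2 or later (for `New-SelfSignedCertificate` and `New-NetFirewallRule`), an elevated PowerShell session on the VM, and that `$DnsName` is the public DNS name clients will use. The function name `Enable-WinRmHttps` is hypothetical.

```powershell
function Enable-WinRmHttps {
    # Hypothetical helper that approximates the artifact's steps.
    # Run it in an elevated session on the VM itself.
    [CmdletBinding(SupportsShouldProcess = $true)]
    param(
        # Public DNS name clients connect to, e.g. <machine>.westeurope.cloudapp.azure.com
        [Parameter(Mandatory = $true)]
        [string] $DnsName
    )

    if ($PSCmdlet.ShouldProcess($DnsName, 'Configure WinRM HTTPS listener')) {
        # Make sure the WinRM service is running and remoting is enabled
        Enable-PSRemoting -Force

        # Create a self-signed test certificate whose subject matches the DNS name
        $cert = New-SelfSignedCertificate -DnsName $DnsName -CertStoreLocation 'Cert:\LocalMachine\My'

        # Add an HTTPS listener (default port 5986) that uses the certificate
        New-Item -Path 'WSMan:\localhost\Listener' -Transport HTTPS -Address * `
            -CertificateThumbPrint $cert.Thumbprint -Force | Out-Null

        # Allow inbound traffic on 5986 through Windows Firewall
        New-NetFirewallRule -DisplayName 'WinRM HTTPS (5986)' -Direction Inbound `
            -Protocol TCP -LocalPort 5986 -Action Allow | Out-Null
    }
}
```

On the VM you would call it as `Enable-WinRmHttps -DnsName '<machine>.westeurope.cloudapp.azure.com'`; running it with `-WhatIf` first shows what it would do without changing anything.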

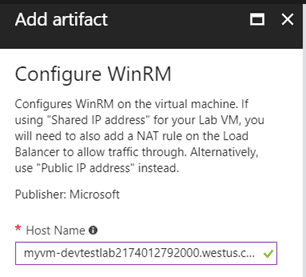

In the Add artifact blade there is now an important warning that wasn’t there before.

What’s this Shared IP address and Public IP address stuff all about?

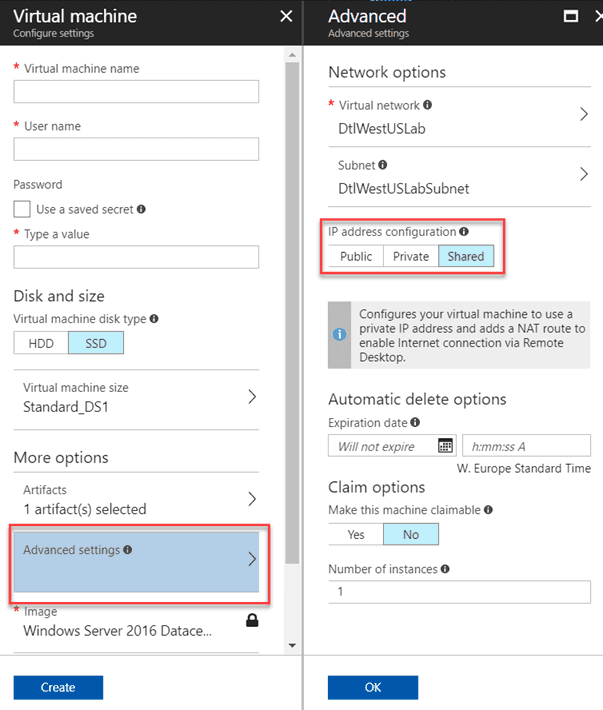

When creating a VM in DevTest Labs, go to the advanced settings to view the different IP configuration options.

By default, the configuration is set to Shared. Shared means that the VM is publicly reachable through the same public IP address as the other VMs in the same network, with each VM distinguished by its own assigned port. Behind the scenes, DevTest Labs automatically creates a load balancer that forwards incoming traffic to the different VMs in your lab. This saves costs, since you pay for each public IP address that you use. The following resources are created when you add a VM with a shared IP address to your lab:

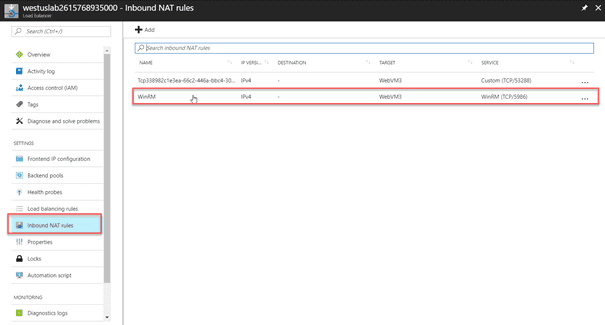

When you want to enable WinRM while using the Shared IP address, you have to add an inbound NAT rule to the load balancer that forwards a public port to the WinRM HTTPS port (5986) of your VM. You have to do this for every VM you create, and each VM needs its own unique public port. You can use PowerShell to automate this, but there is an easier solution.
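If you would rather stay on a shared IP address, the NAT rule can be scripted. This is a minimal sketch, not something DevTest Labs provides: it assumes the Az PowerShell module (`Az.Network`) and a signed-in session (`Connect-AzAccount`), that the lab’s load balancer and the VM’s network interface are in the same resource group, and that the interface’s first IP configuration is the one to map. The helper names `Get-NextFreePort` and `Add-WinRmNatRule`, the rule name pattern and the starting port 50000 are all hypothetical choices.

```powershell
function Get-NextFreePort {
    # Returns the lowest port >= StartPort that is not already in UsedPorts.
    param(
        [int[]] $UsedPorts = @(),
        [int] $StartPort = 50000
    )
    $port = $StartPort
    while ($UsedPorts -contains $port) { $port++ }
    return $port
}

function Add-WinRmNatRule {
    # Hypothetical helper: forwards a unique public port on the lab's shared
    # load balancer to WinRM over HTTPS (5986) on one VM.
    param(
        [Parameter(Mandatory = $true)] [string] $ResourceGroupName,
        [Parameter(Mandatory = $true)] [string] $LoadBalancerName,
        [Parameter(Mandatory = $true)] [string] $NicName
    )

    $lb = Get-AzLoadBalancer -Name $LoadBalancerName -ResourceGroupName $ResourceGroupName

    # Pick a public port that no existing NAT rule on this load balancer uses
    $usedPorts = @($lb.InboundNatRules | ForEach-Object { $_.FrontendPort })
    $frontendPort = Get-NextFreePort -UsedPorts $usedPorts
    $ruleName = "winrm-$NicName"

    # Add the rule locally, then push the updated load balancer to Azure
    $lb | Add-AzLoadBalancerInboundNatRuleConfig -Name $ruleName `
        -FrontendIpConfiguration $lb.FrontendIpConfigurations[0] `
        -Protocol Tcp -FrontendPort $frontendPort -BackendPort 5986 | Out-Null
    $lb = Set-AzLoadBalancer -LoadBalancer $lb

    # Attach the new rule to the VM's network interface so traffic reaches the VM
    # (assumes LoadBalancerInboundNatRules is already a list on this IP configuration)
    $rule = $lb.InboundNatRules | Where-Object Name -eq $ruleName
    $nic = Get-AzNetworkInterface -Name $NicName -ResourceGroupName $ResourceGroupName
    $nic.IpConfigurations[0].LoadBalancerInboundNatRules.Add($rule)
    Set-AzNetworkInterface -NetworkInterface $nic | Out-Null

    return $frontendPort
}
```

`Add-WinRmNatRule -ResourceGroupName <rg> -LoadBalancerName <lb> -NicName <nic>` returns the public port it picked; you would then connect to the shared address on that port instead of 5986. Keeping the port selection in a separate pure function makes the "unique port per VM" rule easy to check on its own.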

When you create your virtual machine, configure it with a public IP address instead of a shared one, and you’re done. This doesn’t create the load balancer, so there is no inbound NAT rule to add. Applying the WinRM artifact is all you need to do. You can then use the following script to connect to your VM:

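```powershell
# Public DNS name of the VM
$hostName = "<machine>.westeurope.cloudapp.azure.com"
# WinRM over HTTPS listens on port 5986
$winrmPort = 5986

# Build a credential for the VM's user account
$passwd = ConvertTo-SecureString "<password>" -AsPlainText -Force
$cred = New-Object System.Management.Automation.PSCredential ("<Username>", $passwd)

# Connect to the machine; -SkipCACheck is needed because the artifact
# installs a test certificate that is not issued by a trusted CA
$soptions = New-PSSessionOption -SkipCACheck
Enter-PSSession -ComputerName $hostName -Port $winrmPort -Credential $cred -SessionOption $soptions -UseSSL
```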
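`Enter-PSSession` is interactive, which is fine for poking around but not for unattended steps in a release pipeline. A minimal sketch of a non-interactive variant uses `New-PSSession` and `Invoke-Command` instead, and first checks whether the port is reachable at all, which separates networking problems (like the shared IP issue above) from authentication problems. The function names `Test-TcpPort` and `Invoke-RemoteDeploymentStep` are hypothetical, and the 3-second timeout is an arbitrary choice.

```powershell
function Test-TcpPort {
    # Returns $true if a TCP connection to ComputerName:Port opens within TimeoutMs.
    param(
        [Parameter(Mandatory = $true)] [string] $ComputerName,
        [int] $Port = 5986,
        [int] $TimeoutMs = 3000
    )
    $client = [System.Net.Sockets.TcpClient]::new()
    try {
        # Wait() returns $false on timeout and throws if the connection is refused
        return $client.ConnectAsync($ComputerName, $Port).Wait($TimeoutMs)
    }
    catch {
        return $false
    }
    finally {
        $client.Dispose()
    }
}

function Invoke-RemoteDeploymentStep {
    # Hypothetical helper: runs a script block on the VM over WinRM/HTTPS
    # without an interactive prompt, then closes the session.
    param(
        [Parameter(Mandatory = $true)] [string] $ComputerName,
        [Parameter(Mandatory = $true)] [pscredential] $Credential,
        [Parameter(Mandatory = $true)] [scriptblock] $ScriptBlock,
        [int] $Port = 5986
    )

    # Fail fast with a networking hint instead of a generic WinRM error
    if (-not (Test-TcpPort -ComputerName $ComputerName -Port $Port)) {
        throw "Cannot reach ${ComputerName}:$Port. Check the firewall rule, NAT rule or public IP configuration."
    }

    # -SkipCACheck because the artifact's certificate is a self-signed test certificate
    $options = New-PSSessionOption -SkipCACheck
    $session = New-PSSession -ComputerName $ComputerName -Port $Port -UseSSL `
        -Credential $Credential -SessionOption $options
    try {
        Invoke-Command -Session $session -ScriptBlock $ScriptBlock
    }
    finally {
        Remove-PSSession -Session $session
    }
}
```

For example, `Invoke-RemoteDeploymentStep -ComputerName $hostName -Credential $cred -ScriptBlock { Get-Service -Name WinRM }` reuses `$hostName` and `$cred` from the script above.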

And that’s it! Happy remoting.